June 4th

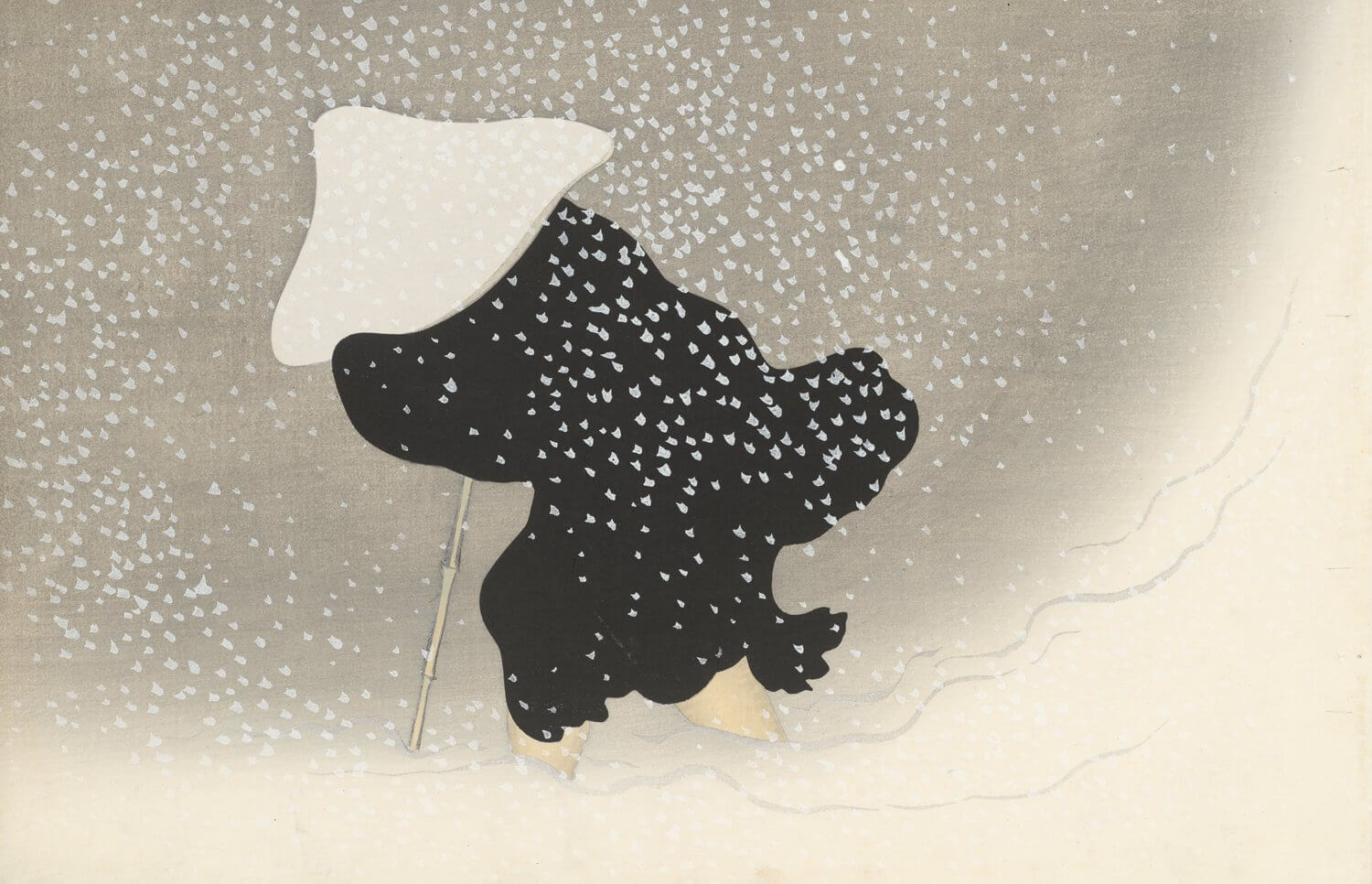

- Today I took a careful look at MLX, the machine-learning framework Apple released, and found it very interesting. It can train and deploy models locally on a MacBook, which is really cool. I tested it with the Qwen1.5-0.5B model on my 32GB M2 Max laptop using the official sample data, and it worked, which I think is quite good. If you want to train models but don't have access to GPUs, MLX is a very good choice. The catch is that training a somewhat larger model means buying a much higher-spec machine next time you upgrade. I'd guess LoRA fine-tuning a 7B model on a 32GB MacBook should be fine? 😂 The screenshot above shows my training run.

- Today I also went through the official code examples for CrewAI and LLM From Scratch. They are quite useful for getting reacquainted with code, since I haven't written any in a long time 😂
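
On the question of whether LoRA on a 7B model fits in 32GB, a back-of-the-envelope estimate helps. This is a minimal sketch that only counts the frozen base weights and the LoRA adapter parameters. The hidden size (4096), rank (8), 32 layers and two adapted projection matrices per layer are illustrative assumptions, not figures from MLX or from any specific model. Activations, optimizer state and the rest of the system also need memory, so treat the numbers as a lower bound.

```python
# Rough memory arithmetic for LoRA fine-tuning; all model dimensions are assumptions.

GIB = 2**30  # bytes per GiB


def weight_memory_gib(num_params: float, bits_per_param: int) -> float:
    """Memory taken by the frozen base weights alone, in GiB."""
    return num_params * bits_per_param / 8 / GIB


def lora_param_count(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters of one LoRA adapter: A is (rank x d_in), B is (d_out x rank)."""
    return rank * d_in + d_out * rank


if __name__ == "__main__":
    params_7b = 7e9
    print(f"fp16 weights:  {weight_memory_gib(params_7b, 16):.2f} GiB")
    print(f"4-bit weights: {weight_memory_gib(params_7b, 4):.2f} GiB")

    # Hypothetical config: hidden size 4096, rank 8, two adapted projections in each of 32 layers.
    per_adapter = lora_param_count(4096, 4096, rank=8)
    trainable = per_adapter * 2 * 32
    print(f"trainable LoRA params: {trainable:,}")
```

This prints about 13.04 GiB for fp16 weights, 3.26 GiB at 4 bits, and 4,194,304 trainable adapter parameters. Under these assumptions a 7B LoRA run looks plausible on 32GB, especially with a quantized base model, though sequence length and batch size will decide the real peak.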

June 2nd

I recently read the official documentation for CrewAI and AutoGen, and I think agents are very cool. Just imagine how interesting it would be to watch two models argue with each other in a conversation.

So one of my recent ideas is to use agents to handle an entire writing workflow. I feel CrewAI might be the better framework for this? I'm not sure yet and need to take a closer look, but I think the idea should be feasible.

Well, this is just one of my whimsical ideas; I'll jot it down for now. 🤣
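
The writing-workflow idea can be sketched without committing to any framework: a chain of role-based agents where each one works on the previous agent's output. This is a minimal, hypothetical sketch. `Agent`, `writing_pipeline` and `echo_llm` are illustrative names, not CrewAI or AutoGen APIs, and `echo_llm` is a deterministic stub standing in for a real model call.

```python
from dataclasses import dataclass
from typing import Callable, List

# A model call takes (instructions, input_text) and returns text.
# Hypothetical signature; replace with a real LLM client in practice.
LLM = Callable[[str, str], str]


@dataclass
class Agent:
    role: str          # e.g. "outliner", "writer", "editor"
    instructions: str  # what this role should do with its input

    def run(self, llm: LLM, text: str) -> str:
        return llm(self.instructions, text)


def writing_pipeline(topic: str, agents: List[Agent], llm: LLM) -> List[str]:
    """Run agents in order; each agent works on the previous agent's output."""
    outputs: List[str] = []
    current = topic
    for agent in agents:
        current = agent.run(llm, current)
        outputs.append(current)
    return outputs


def echo_llm(instructions: str, text: str) -> str:
    """Deterministic stub so the sketch runs without an API key."""
    return f"[{instructions}] {text}"


if __name__ == "__main__":
    team = [
        Agent("outliner", "outline"),
        Agent("writer", "draft"),
        Agent("editor", "revise"),
    ]
    for step in writing_pipeline("Training on a MacBook with MLX", team, echo_llm):
        print(step)
```

Swapping `echo_llm` for a real model call, or letting the editor send feedback back to the writer for a few rounds, would turn this into the kind of back-and-forth between models described above. Frameworks such as CrewAI wrap roughly this pattern with richer role and task definitions and their own orchestration.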

- Author: BubbleBrain

- URL: https://chengshengddeng.com/article/pieces-of-thoughts-in-june

- Copyright: Unless otherwise stated, all articles on this blog are licensed under BY-NC-SA. Please credit the source!

Related Posts

June 4, Pieces of Thoughts in June